Jupyter is a dining hall tray

Analytics is outgrowing Jupyter. Let's talk about what ought to come next.

👋 Hello! I’m Robert, CPO of Hyperquery and former data scientist. Welcome to Win With Data, where we talk weekly about maximizing the impact of data. As always, find me on LinkedIn or Twitter — I’m always happy to chat. 🙂

When it snows, the steps of Harvard's Widener Library transform into an unrivaled sledding surface—you just have to duck the chain on the way down. As more people slide down, the snow grows increasingly compact, plastering the steps and slicking the path until the stairs vanish beneath a perfectly laminar sheet of ice, sloped at a satisfying 30% grade.

And here, dining hall trays made the perfect sleds. They were flat, so they didn’t crack on the ice. Because of their low profile, it was simpler to duck the chain at the end. And casual theft aside, they were free.

One day, I came across a proper sledding hill for the first time. I eagerly brought a dining hall tray along, and I learned that, as it turns out, trays make terrible sleds under normal circumstances. They sink, they pile up snow, they lack a steering mechanism.

But of course, this isn't a post about sledding or things I did before my prefrontal cortex fully developed. It's a post about Jupyter. And Jupyter is a dining hall tray. Now that we're sledding on proper hills, we need sleds.

A walk through analytics history

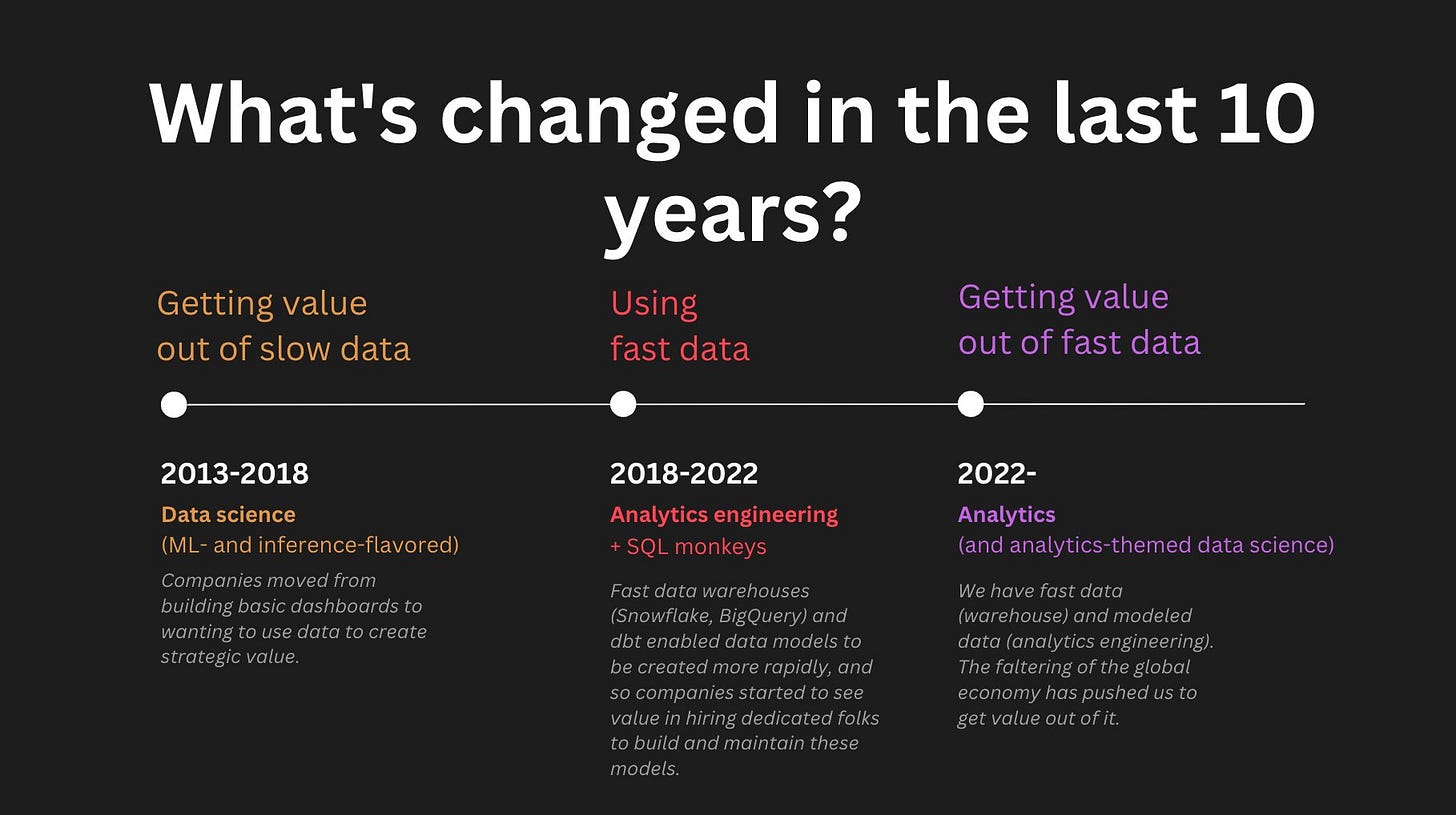

In the 2010s, analytics was different: it was all about dashboards, the SQL IDE, and Jupyter. We grew accustomed to this particular toolchain because it was optimal for our workflows at the time, but the conditions of our work have changed.

At the time, dashboards (and more generally, data products) took a lot of time to build and maintain, so we didn't have much time for exploratory analytics. Dashboards required the creation of pre-aggregated cubes to reduce query time and infrastructure complexity. They were production-grade objects that needed production-grade support. But because they provided a non-negotiable level of visibility into the business, organizations had to hire teams of folks to maintain them.

What little time we had left was spent on exploration. But because SQL was still so infuriatingly slow, it was generally best to save data to a CSV and then explore it in Jupyter. And so, Jupyter became the de facto tool for more technically deep exploratory analytics. Data Science became all the rage, and PhDs finally had viable career paths outside of academia.

Why Jupyter is a dining hall tray

With the rise of modern data storage and access mechanisms (warehouses + data lakes), times have changed. Data products have never been easier to create and productionize. And once a core set of dashboards is erected, the work that remains entails discovery of deeper insights that can advance the business. Queries are an order of magnitude faster than they once were, and it's often more efficient (not to mention more reproducible) to iterate on work directly in SQL than it is to download the results. And so analytics has new legs. Value comes not from repeatedly torturing the same data set, but from successive hypothesis-testing, lightweight querying, and iterative traversal through the data warehouse.

We've entered the era of exploratory analytics, and, as much as I love Jupyter, it's not built for this world. First and foremost, it's clunky and overbearing for SQL workflows. But what’s more, in the warehouse-surfing we do, Jupyter discourages stakeholder involvement, and shutting stakeholders out is the cardinal sin of analytics.

So what's a sled?

What does the optimal surface look like for exploratory analytics, if it's not Jupyter?

We need a notebook that's ergonomic not only for technical work, but also for creation and consumption.

Analytics work isn't purely technical. There are processes around the technical work (SQL + Python): aligning on the business value beforehand, and interpreting and sharing the findings afterward.

Exploratory analytics needs can be understood through two eigenquestions:

How should our work be done?

How should our work be delivered?

How work should be done

Work should still be done in a notebook, absolutely. We iterate, increasingly so. IDEs are not optimized for iteration, end of story.

Queries are fast, and consequently, we spend a lot less time carefully crafting a single hit against our warehouse, and much more time iteratively running queries. We throw caution to the wind and run and re-run select * limit 1 as many times as we need. [1]

This was the initial appeal behind the Jupyter notebook. All we need now is something SQL-enabled so we can throw out our pyodbc boilerplate.
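For anyone who hasn't lived it, here's a rough sketch of the boilerplate in question. To keep it runnable anywhere, it uses Python's built-in sqlite3 module and an in-memory database as a stand-in for a warehouse. That swap is my assumption, as are the `run_query` helper and the `orders` table, which are made up for illustration. With pyodbc the shape is the same (connect, cursor, execute, fetch), though the connection string and driver would depend on your setup.

```python
import sqlite3


def run_query(conn, sql, params=()):
    """Hypothetical helper: run one query, return (column_names, rows)."""
    cur = conn.cursor()
    try:
        cur.execute(sql, params)
        # DB-API cursors expose column names as the first item of each
        # entry in cursor.description.
        columns = [d[0] for d in cur.description]
        return columns, cur.fetchall()
    finally:
        cur.close()


# Stand-in for a warehouse connection (assumption: in-memory SQLite).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "east", 10.0), (2, "west", 25.0), (3, "east", 5.0)],
)

# The actual iteration is one line of SQL; everything above is ceremony.
columns, rows = run_query(
    conn,
    "SELECT region, SUM(amount) AS total FROM orders "
    "GROUP BY region ORDER BY region",
)
print(columns, rows)
```

In a SQL-enabled notebook, the hope is that only the query at the bottom would need to be written and re-written.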

How work should be delivered

Unfortunately, where Jupyter shines in technical work, it falls short in delivery. For exploratory analytics, the end product is not a dashboard (even if the work ultimately leads to the creation of a dashboard). It's the analysis—a clear narrative that interleaves raw data with interpretation towards an objective. The deliverable is knowledge, not data. Analyses, not dashboards. And as such, this work needs to be delivered in a way that makes it easy and natural for others to follow along. It needs to be approachable, streamlined for comprehension.

And Jupyter is horrible at this. It’s opaque, and not only because of its hidden states, but because of its inherent technical complexity, which is a substantial hindrance for our less technical counterparts. Handing someone a Jupyter notebook is like handing someone a calculator—it’s a computational environment through and through, and no amount of UI polish can save it from this perception.

The rise of the notebook

Sometimes it’s difficult to disentangle my own beliefs from what I need to be true for my company to succeed. But even with that bias on the table, on this bet, I’m steadfast.

We need a notebook with the rich technical capabilities of Jupyter, but better suited to the interdisciplinary nature of analytics. A notebook with a low floor and a high ceiling. A tool purpose-built for analyses in the same way the dashboard was built for metrics.

The world is ready for a proper analytics notebook.

[1] Well, I’d recommend you don’t actually do this.