How poor stakeholder management ruins analytics

Why taking ownership of the analyst-stakeholder interface can instantly make your life better and your work more impactful.

👋 Hello! I’m Robert, CPO of Hyperquery and former data scientist + analyst. Welcome to Win With Data, where we talk weekly about maximizing the impact of data. As always, find me on LinkedIn or Twitter — I’m always happy to chat. And if you enjoyed this post, I’d appreciate a follow/like/share. 🙂

You’ve just started a new role as an analyst. You’re excited to get insider access to data about, say, cat furniture — an industry you’re deeply passionate about. Your first request comes in: an executive wants to know how many kinds of litter boxes they carry. Eager to please, you pull the data. “Perfect! Could you also pull the number of cat beds?” You realize it’d be more efficient to just create a dashboard for her, so you make one. The executive is ecstatic, and you feel like you’ve done well, advancing the data-driven credo.

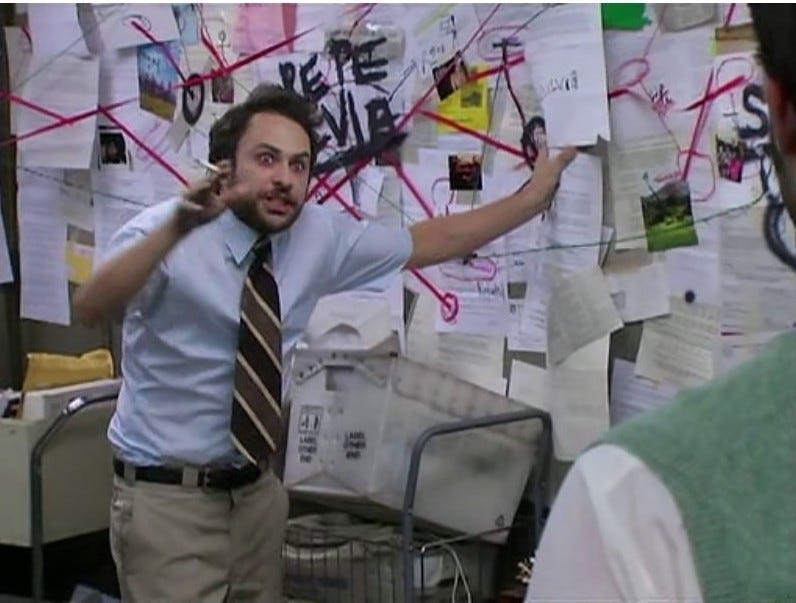

Later, you find out that those numbers were used to support an argument about which categories should be put on the homepage. You recoil. Those numbers were not meant to be used in that way, and you can think of a dozen other ways to come up with more optimal categories. You pulled those numbers for … well, you never knew why, but you didn’t think it was for that. You see the executive’s write-up on the initiative, and you realize your data’s been co-opted for mad libs analytics. There they are — your data — sitting alongside tangential arguments in a nonsensical way. You’re uncomfortable with the lack of logical follow-through, so you protest to the executive, but she assures you it’s fine.

“I just needed to support my arguments, and no one will look that closely anyway.”

You sigh, and you go back to building more dashboards. This time around, you focus instead on what you can control — data quality initiatives, building more elegant data models, optimizing your queries.

I imagine that most of you, like me, have lived this story again and again. And it seems intractable because the problems seem so external — we walk away from these transactions feeling like stakeholders simply don’t understand how data works. We attribute the problems to a broken corporate culture, and we resign ourselves to finding impact in some other way — at least, until we find our next role at a company that’s more “data-driven”.

But in this post, I want to directly talk about how to improve this situation, and thereby improve our quality of life as analysts by breaking this cycle. In particular, I’ll argue that our two failings at the heart of the story above are:

Our failure to take ownership of how our data is used.

A poor understanding of how decision-making happens.

Now, let’s talk about each of these issues.

Problem 1: we need to take more ownership of how our data is used.

Analytics is a Dunning-Kruger attractor: most people think they understand how to interpret data, but very few folks do it well. After all, the pitfalls are numerous. This dichotomy is a core problem in our industry, and I imagine it’s why we’re often undervalued, misunderstood, and the first out the door when executives trim the fat.

And unfortunately, we analysts enable this delusion by simply being who we are: researchers, scientists, hermits — likes: thinking, flow, math, rigor; dislikes: answering ad hoc requests, persuasion. When a colleague dares to ask us for help, at best, we’ll concoct brilliant analyses, then throw the esoteric results in the general direction of the people who actually need them. At worst, we’ll send back raw data without offering any interpretation, leaving our stakeholders to (erroneously) navigate bias themselves.

And that’s the problem. This kind of behavior betrays our misunderstanding of how analytics should operate: the onus should not be on others to make our work useful — it must be on us. I’ve spoken before about how analytics is an interfacial discipline, but a repercussion of this is that we must take some level of ownership of the interface.

Consider any other interfacial discipline and you’ll see that the most effective ICs operate in this way. Great designers, for instance, build with engineering considerations in mind. Great engineers scrutinize product requirements docs and work closely with designers and product managers. Customer service representatives and salespeople meet customers where they are — imagine how effective a salesperson would be if they waited for their potential customers to contact them.

But that’s what we do in analytics. We operate like a service organization, rather than thought partners, and it’s no wonder that’s how we’re ultimately treated. And I think as an industry we know this down in our hearts. We’ve collectively dreamed up whole libraries of operational wisdom over the last few years, all of which seems to point to a common root cause: we aren’t taking ownership of the interface:

We should be running our teams like product teams.

… because product teams are always thinking about the customer, just as we ought to care deeply about how our analyses are used — the interface.

We should be focusing less on technical work.

… because technical work is half the battle. The delivery — the interface — is the other half.

We should be obsessed with providing interpretation through analyses, not just raw data through dashboards.

… because the stakeholder-analyst interface is mediated through interpretation.

We need to build truth-seeking ecosystems.

… which is, again, a mental model for understanding what the stakeholder interface should look like.

It’s therefore in your best interest to try to take ownership of how your work is used. Ask for access, join meetings, come out of your hermitage for a moment, and share your renewed philosophy with your stakeholders. It’s a gateway to both your impact and theirs.

Problem 2: we don’t know how decision-making works

So of course, I’d wager that most of us do try to manage our stakeholders in earnest. But still, we flounder, and that brings me to the second big thing getting in our way: we don’t know how to be involved in the decision-making process. Some of us take on the mantle of pedantry, advancing rigor for rigor’s sake. Other times, we sit quietly in the corner waiting to be called on. The problem? We don’t know how decision-making works, and thus don’t know how to usefully inject ourselves into that process. So let’s talk about how decision-making works.

I used to be a hardcore analyst, but over the last few years, I’ve had the wonderful opportunity to run product at Hyperquery. And while you might think building a data tool is not so different from doing data work, I’ve felt this immense cognitive dissonance against how I used to operate as an analyst. Where once my world was entirely quantitative, I suddenly found myself making decisions where I only really had qualitative data at my disposal. And so I adapted — I’ve steadily learned to sculpt reasonable decisions from highly ambiguous clay. But throughout this whole experience, my most staggering revelation was this: in the last three years, no decision I’ve made was entirely predicated on the results of a SQL query.

Even with data easily available to me, and even with data in my veins, the truth is: data is just never the main thing. And from this, I’ve come to the stark realization that we, as data practitioners, have a fundamental misunderstanding of data’s role in decision-making. Plain and simple: it just isn’t important in the ways that I thought it was. That’s not to say it’s not important, just that it’s not the infallible, objective representation of Truth I once deluded myself into thinking it was.

Data is not the whole story. Data is a data point.

That’s not to say it’s not useful — data can be incredibly powerful in its own right. It doesn’t tell you to do something, but it can close off forks in the road. It’s never tantamount to a decision, but it can act as an accelerant to one. It can’t tell you what to do, but it can speak for your customers when they themselves stay silent. It isn’t the answer, but it can simplify understanding and provide clarity that makes it easier to see an answer. Quantitative data has helped me navigate ambiguity in the same way that qualitative data does. I see the data, I adjust my priors accordingly, and I walk down a different route of the idea maze. And in that capacity, it’s been invaluable.

But it’s a characterization of data that is wholly different from how I understood data as a data practitioner. When your entire world is data, it’s easy to think that’s all there is. But it’s important to understand the role of our work so we can better fit into decision-making conversations. We should never force decisions, but increasing our contextual understanding can help us make useful recommendations. You’re sitting in the passenger seat, and if you know where you’re going, you can help route there.

Final comments

Now I know that all that seems easier said than done — I’m certain the ambiguity of my advice thus far is your biggest blocker at this point. To make this a bit clearer, let’s reconsider the story from the start of this post, imagining that things went a little differently. Once again, your stakeholder wants to know how many kinds of litter boxes you carry. Instead of jumping to a query, you ask why. It turns out the executive wants to pick some categories for the homepage. You offer suggestions around recommendation systems, but she complains that’s too much. Realizing she has a tight timeline for delivery, you tell her you’ll build a quick dashboard so you can look at the data together, then make a coherent decision based on that information. She agrees. You pull additional metrics on top of the sheer count she initially requested — clickthrough rate, order rate, average ratings. You even define a new metric: % of low exposure items. You share the data with your stakeholder, alongside your recommendations.
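If it helps to see what those category metrics might look like in practice, here is a rough Python sketch. Everything in it is an assumption made for illustration: the `Item` fields, the choice to define order rate as orders per click, and the cutoff of 100 impressions for calling an item "low exposure" are all hypothetical. Your own definitions should come out of the conversation with your stakeholder.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Item:
    # Hypothetical per-item record; field names are illustrative only.
    category: str
    impressions: int
    clicks: int
    orders: int
    rating: float


# Assumed cutoff: items seen fewer times than this count as "low exposure".
LOW_EXPOSURE_THRESHOLD = 100


def summarize(items, low_exposure_threshold=LOW_EXPOSURE_THRESHOLD):
    """Roll item-level numbers up into one row of metrics per category."""
    by_category = defaultdict(list)
    for item in items:
        by_category[item.category].append(item)

    summary = {}
    for category, group in by_category.items():
        impressions = sum(i.impressions for i in group)
        clicks = sum(i.clicks for i in group)
        orders = sum(i.orders for i in group)
        low_exposure = sum(
            1 for i in group if i.impressions < low_exposure_threshold
        )
        summary[category] = {
            "item_count": len(group),
            # Clicks per impression; guarded against empty traffic.
            "clickthrough_rate": clicks / impressions if impressions else 0.0,
            # Assumed definition: orders per click.
            "order_rate": orders / clicks if clicks else 0.0,
            # Unweighted mean of item ratings.
            "avg_rating": sum(i.rating for i in group) / len(group),
            # Share of items in the category below the exposure cutoff.
            "pct_low_exposure": 100 * low_exposure / len(group),
        }
    return summary


# Made-up sample data, purely to exercise the function.
items = [
    Item("litter boxes", impressions=1000, clicks=50, orders=5, rating=4.0),
    Item("litter boxes", impressions=40, clicks=2, orders=0, rating=3.0),
    Item("cat beds", impressions=500, clicks=50, orders=10, rating=4.5),
]
summary = summarize(items)
for category, metrics in summary.items():
    print(category, metrics)
```

The point is not the arithmetic. A raw count sitting next to exposure and conversion numbers gives you and your stakeholder something to reason about together, rather than a single figure to drop into a slide.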

She pushes back, of course. But instead of pushing back in turn, you try to understand the basis of your stakeholder’s objections to reach an optimal decision. From a deep discussion, together, you form hypotheses that blend your quantitative findings and her qualitative intuition, and these become the basis of robust category creation. You write up an analysis that’s linked in her product strategy document. The choices she made are vastly improved, and you feel like you’ve genuinely changed the arc of cat furniture history.

It’s a fundamentally different story than the one we started with, but its success was predicated on only two small changes:

You took ownership of how the data was going to be used by asking why.

You blended data into her intuition, rather than trying to override it. This was possible because you had a clear understanding of how data should be involved in the decision-making process.

We have a proclivity towards dumping data on stakeholders, leaving the synthesis to them. The best analysts, though, go a step beyond, and dive headfirst into the qualitative data as well, deeply understanding the objective they’re trying to accomplish. They own the responsibility of melding their insights into the decision-making process. They understand the objective function: an intellectually honest decision. And so they operate in a way that seeks to get there, rather than simply accomplishing what is asked of them, resigning themselves to patterns of data access that their stakeholders have fallen into, and sitting quietly in a room of executives until they’re called upon.

I hope you’re convinced that the behavioral changes I’m proposing aren’t so drastic. I’m sure you’ve heard that voice telling you to involve yourself more deeply — “maybe I should ask why this data is needed” — but eh, you decide you don’t have time. Well, next time, just listen to that voice.
